We all know that running a data center on Earth has its challenges. Electricity, heat, clean rooms, air conditioning, and humidity all have to be dealt with.

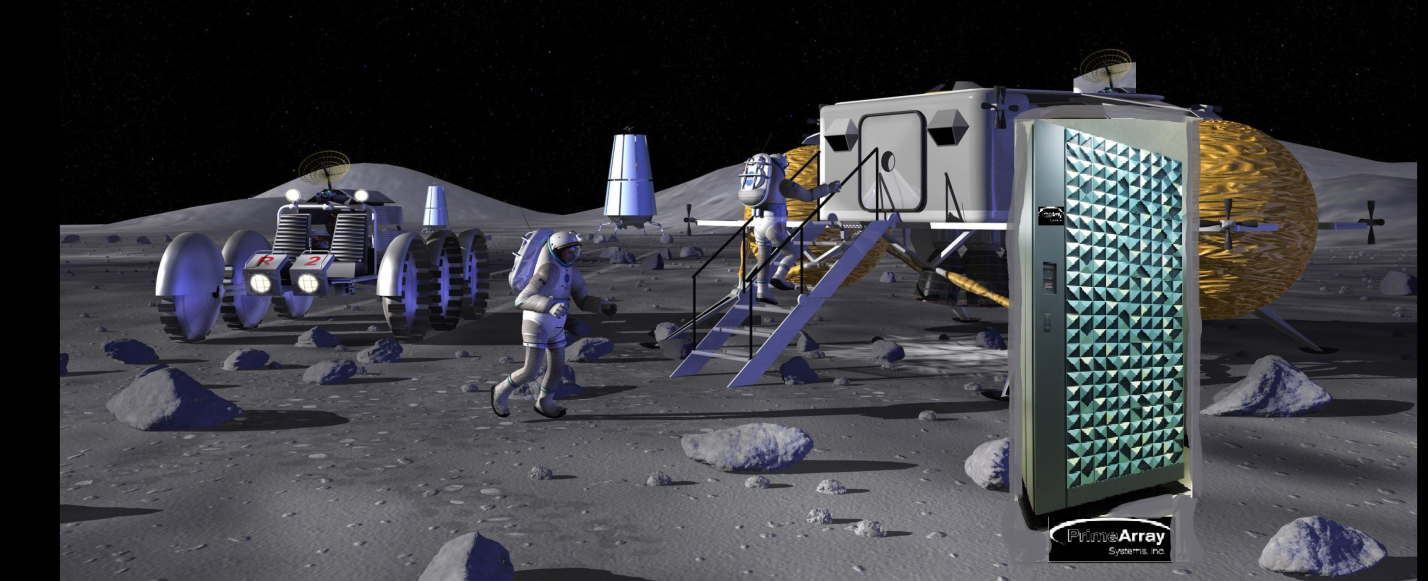

How would these electronics fare in very different environments, such as in space or on the Moon?

For the time being, PrimeArray has you covered here on planet Earth, but let’s explore the future…

If you haven’t followed the New Space Age closely, the next few decades may knock your socks off. Anticipated are a permanent presence on the Moon, a commercial space station, private citizens traveling to orbit, space-based medical treatments, deep space travel, and a flurry of activity involving mining, producing, and exploiting space resources.

No one can be certain of the future, but if only a fraction of these projects come to pass, they will rely on large amounts of data. Data will be stored, accessed, and processed in space. And as data finds a home in space, we may also see more of Earth’s data moving into orbit.

How Would a Space Data Center Work?

Man could never have broken orbit without data storage, although he didn’t need much compared to our present computing standards. The guidance computer on board Apollo 11 needed only about 4 KB of RAM and roughly 72 KB of read-only core rope memory (it had no hard disk) to land Neil Armstrong and Buzz Aldrin on the lunar surface. An Apple Watch Series 7, by comparison, has 1 GB of RAM and 32 GB of storage.
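To put that gap in numbers, here is a minimal sketch that divides the Apple Watch's RAM by the Apollo computer's RAM, using the figures quoted above. It assumes binary units (1 KB = 1,024 bytes), and the variable names are purely illustrative.

```python
KB = 1024            # bytes in a kilobyte (binary units, an assumption)
GB = 1024 ** 3       # bytes in a gigabyte

apollo_ram_bytes = 4 * KB    # Apollo guidance computer RAM, as quoted above
watch_ram_bytes = 1 * GB     # Apple Watch Series 7 RAM, as quoted above

# Integer division is exact here because both values are powers of two.
ram_ratio = watch_ram_bytes // apollo_ram_bytes

print(f"The watch has {ram_ratio:,} times the RAM of the Apollo computer")
```

Under these assumptions the watch carries a little over a quarter of a million times more RAM.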

That’s not to say that space-bound computers haven’t caught up: the ISS’s supercomputer can operate in harsh environments and perform edge computing at teraflop speeds.

PrimeArray predicts that the future of space computing is less likely to focus on raw computing power than on distributed storage.

Space Doesn’t Like SSDs

HPE’s first Spaceborne Computer showed how hard space is on storage. The space-based system had 20 solid-state drives, nine of which failed over the course of the mission. In the Earth-based twins, only one drive failed.
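The drive counts above are easier to compare as failure rates. The sketch below is a rough illustration only: the text does not say how many drives the Earth-based twins carried, so it assumes the same 20 drives, and `failure_rate` is a hypothetical helper.

```python
def failure_rate(failed, total):
    """Return the fraction of drives that failed."""
    return failed / total


# Space-based system: 9 of 20 drives failed (figures from the text).
space_rate = failure_rate(failed=9, total=20)

# ASSUMPTION: the Earth-based twins also had 20 drives; the text only
# states that one drive failed.
earth_rate = failure_rate(failed=1, total=20)

print(f"Space failure rate: {space_rate:.0%}")
print(f"Earth failure rate: {earth_rate:.0%}")
```

If the assumption holds, nearly half the drives failed in orbit, against one in twenty on the ground.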

For Spaceborne Computer-2, NASA wanted a system that would last at least three years, the time it would take to go to Mars and back. So HPE doubled the hardware: there are now four servers in total, two in each locker.

Spaceborne Computer-2 includes the HPE Edgeline Converged EL4000 Edge System, a rugged server for harsher environments, paired with the industry-standard HPE ProLiant DL360. The Edgeline EL4000 includes a GPU for AI, machine learning, and image processing.

Data Centers in Space – 3 Stages

The deployment of mega-constellations and smallsats in Low Earth Orbit (LEO) is driving the need for satellite data centers. The three stages below trace how space systems follow terrestrial systems and evolve to enable AI at the edge:

Stage 1: Space Data Collection

- Thousands of LEO satellites collect data → sent to a few space data centers in LEO/MEO → buffered → long-term storage → downlinked to Earth for AI model generation.
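The Stage 1 flow above can be pictured as a small pipeline. This is a toy sketch, not a description of any real system: the `SpaceDataCenter` class and its methods are hypothetical names invented for illustration, and three satellites stand in for thousands.

```python
from collections import deque


class SpaceDataCenter:
    """Toy model of a Stage 1 data center in LEO/MEO (hypothetical)."""

    def __init__(self):
        self.buffer = deque()   # short-term buffer for incoming readings
        self.storage = []       # long-term storage held on orbit

    def receive(self, satellite_id, reading):
        # A LEO satellite hands its reading to the data center.
        self.buffer.append((satellite_id, reading))

    def commit(self):
        # Drain the buffer into long-term storage, oldest reading first.
        while self.buffer:
            self.storage.append(self.buffer.popleft())

    def downlink(self):
        # Return a copy of stored data for Earth, where AI models are built.
        return list(self.storage)


center = SpaceDataCenter()
for sat_id in range(3):         # stand-in for thousands of satellites
    center.receive(sat_id, f"image-{sat_id}")

center.commit()
earth_copy = center.downlink()
print(f"Downlinked {len(earth_copy)} readings")
```

Keeping the buffer separate from long-term storage mirrors the "buffered → long-term storage" steps in the list.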

Stage 2: Security & Latency

- Data may be public domain or national asset → must be secured → transmitted back via RF or lasers → future migration to GEO data centers.

Stage 3: The Ultimate Data Center in Space

Data centers move into space to mitigate power consumption & pollution. The EU’s ASCEND program is studying the feasibility of space-based cloud data centers to reduce Earth’s carbon footprint. Digital infrastructure today consumes vast amounts of energy – e.g., EU/MEA data centers use 90 TWh/year and produce emissions equal to 27 million cars.
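For a sense of scale, the 90 TWh/year figure can be converted into an average continuous power draw. This is a back-of-the-envelope sketch that assumes a 365-day year and perfectly even consumption, which real data centers do not have.

```python
HOURS_PER_YEAR = 365 * 24        # 8,760 hours, ignoring leap years

annual_energy_twh = 90           # figure quoted in the text
annual_energy_gwh = annual_energy_twh * 1000   # 1 TWh = 1,000 GWh

# Energy divided by time gives average power.
average_power_gw = annual_energy_gwh / HOURS_PER_YEAR

print(f"Average continuous draw: about {average_power_gw:.1f} GW")
```

That works out to roughly 10 GW of round-the-clock demand.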

Space Is the Final Frontier

In 2021, the HPE Spaceborne Computer-2 (EL4000 + ProLiant with Nvidia T4 GPUs) became the first off-the-shelf server deployed in space to run production workloads.

Yet elsewhere in space, most systems run decades-old tech: Mars landers, satellites, and the ISS (which still uses Intel 80386SX CPUs from the 1980s). Key systems rely on hardened, radiation-protected hardware.

Future: True High-Density Data Centers

- Selectors enable dense MRAM storage → allows space data centers in LEO/MEO to generate AI models in space → reduces dependency on Earth. Critical for Moon & Mars bases where backhauling data won’t be viable.
